Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

NetSecGame v0.2.0 - Reproducible Experiments and a More Robust Game Server

NetSecGame v0.2.0 is here. This release focuses on what matters most for reproducible AI research: deterministic episode control, a more robust simulation server, and a significantly expanded test suite. Whether you are running large-scale RL experiments or debugging a new agent, v0.2.0 makes the process more reliable.
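
Deterministic episode control rests on one idea: if every source of randomness in an episode is derived from a seed passed at reset, then the same seed replays the same episode. The Python sketch below illustrates this. `ToyEpisodeEnv`, `reset(seed)`, `step()` and `run_episode` are hypothetical names chosen for illustration. They are not the NetSecGame API.

```python
import random
from dataclasses import dataclass, field


@dataclass
class ToyEpisodeEnv:
    """Hypothetical stand-in for a game server; NOT the NetSecGame API."""
    max_steps: int = 5
    rng: random.Random = field(default_factory=random.Random)
    steps_taken: int = 0

    def reset(self, seed: int) -> int:
        # Re-seed a private RNG on every reset, so the episode depends only
        # on its seed and not on global random state or previous episodes.
        self.rng = random.Random(seed)
        self.steps_taken = 0
        return self.rng.randint(0, 9)  # initial observation

    def step(self, action: int) -> tuple[int, float, bool]:
        self.steps_taken += 1
        obs = self.rng.randint(0, 9)
        reward = 1.0 if obs == action else 0.0
        done = self.steps_taken >= self.max_steps
        return obs, reward, done


def run_episode(env: ToyEpisodeEnv, seed: int) -> list[tuple[int, float]]:
    """Play one episode with a trivial policy and record (observation, reward)."""
    trajectory = []
    obs = env.reset(seed)
    done = False
    while not done:
        obs, reward, done = env.step(action=obs)  # policy: repeat last observation
        trajectory.append((obs, reward))
    return trajectory


if __name__ == "__main__":
    # The same seed replays the same episode, even on a fresh environment.
    assert run_episode(ToyEpisodeEnv(), seed=42) == run_episode(ToyEpisodeEnv(), seed=42)
```

The RNG is kept private to the environment instead of calling the global `random.seed`. This way, other code running in the same process cannot silently change an episode.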

New Slips version v1.1.19 is here!

Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

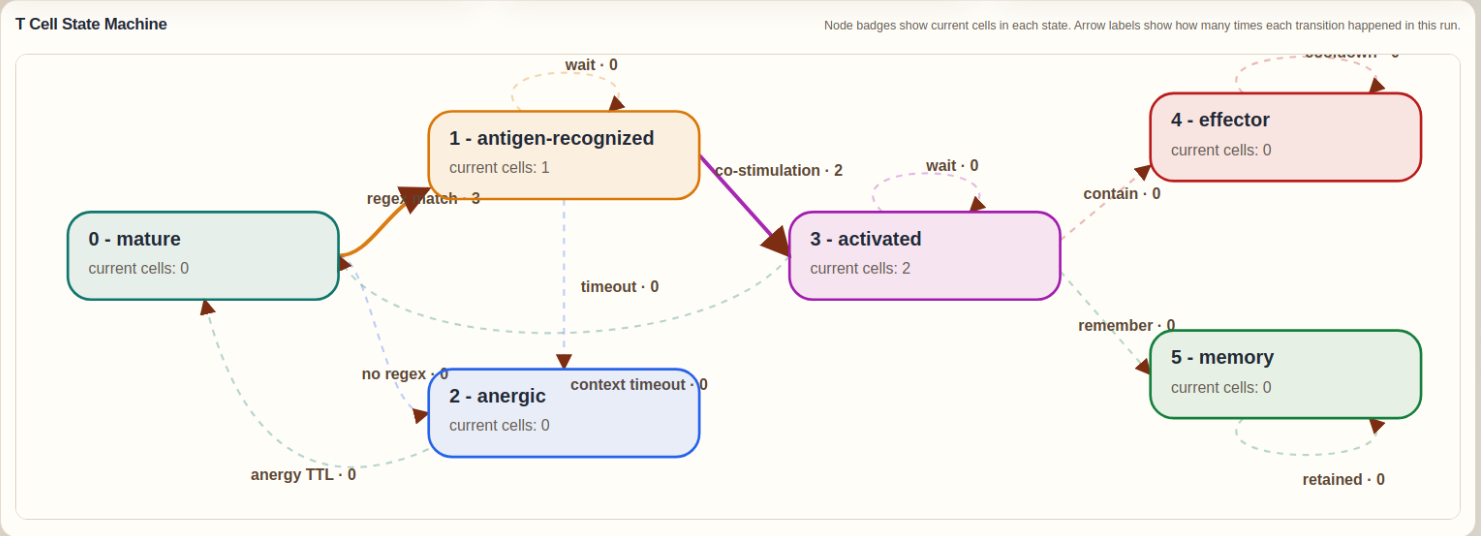

Adaptive Response in Slips IDS as Immune T Cells

The T Cell module was created to give Slips a stateful adaptive response layer on top of its existing evidence pipeline. While the original detectors already provide the innate immune component through PAMP and DAMP evidence, the T Cell module adds antigen recognition, co-stimulation, context evaluation, tolerance, activation, effector action, and memory. It does this by extracting structured antigens from live evidence, matching them against the accepted regex repertoire generated by RegexGenerator, and then combining that recognition with the cumulative danger signaled by recent PAMP and DAMP observations. This allows Slips to move from isolated detections to a more explicit immune decision process that can decide when to ignore, when to contain, and when to remember.
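
The two-signal logic can be made concrete with a minimal Python sketch. Recognition means a repertoire regex matches an antigen. Co-stimulation means enough recent PAMP/DAMP danger has accumulated. The sketch combines the two into ignore/contain decisions, with tolerance and memory. It is not the Slips T Cell module. The `Evidence` and `TCellSketch` classes, the per-evidence `danger` weights, the 300-second window and the activation threshold are all assumptions made for illustration.

```python
import re
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Evidence:
    kind: str      # "PAMP" or "DAMP" (assumed labels, descriptive only here)
    value: str     # antigen source, e.g. a domain or TLS SNI taken from the evidence
    danger: float  # hypothetical per-evidence danger weight
    ts: float      # traffic timestamp in seconds; evidence assumed to arrive in order


@dataclass
class TCellSketch:
    repertoire: list                      # compiled regexes, e.g. accepted by a generator
    tolerated: set = field(default_factory=set)
    window_s: float = 300.0               # hypothetical danger window
    activation_threshold: float = 1.0     # hypothetical co-stimulation threshold
    memory: set = field(default_factory=set)
    recent: deque = field(default_factory=deque)

    def observe(self, ev: Evidence) -> str:
        # Context: keep only danger signals seen within the sliding window.
        self.recent.append(ev)
        while ev.ts - self.recent[0].ts > self.window_s:
            self.recent.popleft()
        antigen = ev.value.lower()
        if antigen in self.memory:
            return "contain"              # memory: fast secondary response
        if antigen in self.tolerated:
            return "ignore"               # tolerance: known-benign antigen
        recognized = any(p.search(antigen) for p in self.repertoire)  # signal 1
        danger = sum(e.danger for e in self.recent)                   # signal 2
        if recognized and danger >= self.activation_threshold:
            self.memory.add(antigen)      # activation: effector action plus memory
            return "contain"
        return "ignore"
```

Requiring both signals keeps a regex match on its own harmless. Recognition without enough surrounding danger is ignored. A confirmed antigen goes to memory and is contained immediately the next time it appears.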

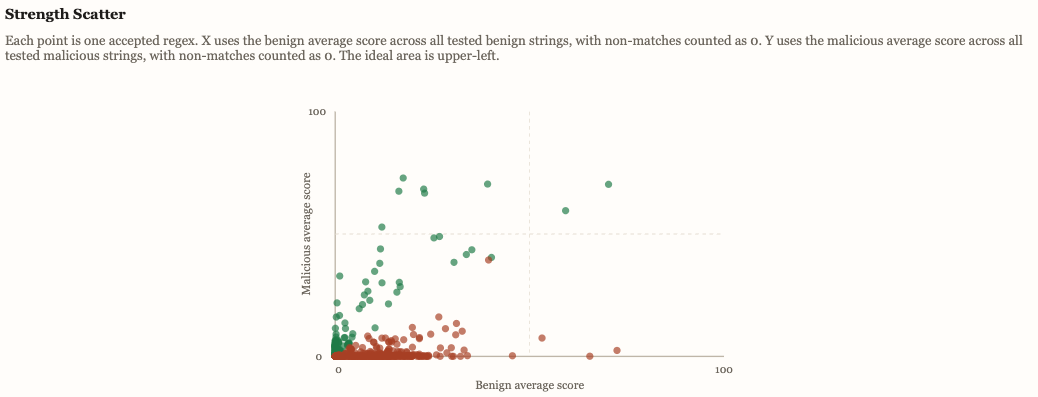

Adapting Detections in Slips with Immune Pseudo-Generated Regexes

The RegexGenerator module was created to give Slips an adaptive way to discover new string-based detectors for changing indicators such as domains, URIs, filenames, TLS SNI values, and certificate common names. It continuously uses the shared LLM service to propose one regex at a time, then applies local validation and negative selection against benign corpora to reject unsafe or overly broad patterns. The accepted regexes become a reusable adaptive recognition repertoire for other modules, especially the T Cell responder.
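
The propose, validate and negatively select loop can be sketched as a small filter. In this Python sketch, a fixed list of candidate patterns stands in for the LLM. The specific local checks are assumptions for illustration: a length cap and the rejection of patterns that match the empty string. The toy benign corpus is also an assumption. None of these are the RegexGenerator's actual rules.

```python
import re
from typing import Iterable, Optional


def validate_candidate(pattern: str, max_len: int = 200) -> Optional[re.Pattern]:
    """Local checks (hypothetical): bounded length, compiles, not trivially broad."""
    if len(pattern) > max_len:
        return None
    try:
        compiled = re.compile(pattern)
    except re.error:
        return None
    # A pattern that matches the empty string matches every input.
    if compiled.search("") is not None:
        return None
    return compiled


def negative_selection(compiled: re.Pattern, benign: Iterable[str]) -> bool:
    """Keep the detector only if it matches nothing in the benign corpus."""
    return not any(compiled.search(s) for s in benign)


def build_repertoire(candidates: Iterable[str], benign: Iterable[str]) -> list:
    benign = list(benign)
    repertoire = []
    for pattern in candidates:  # stand-in for LLM proposals, one at a time
        compiled = validate_candidate(pattern)
        if compiled is not None and negative_selection(compiled, benign):
            repertoire.append(compiled)
    return repertoire
```

Negative selection mirrors how the thymus eliminates T cells that react to self. A detector that fires on anything in the benign corpus is discarded, so only patterns with no false positives on that corpus enter the repertoire.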

New Slips version v1.1.18 is here!

Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

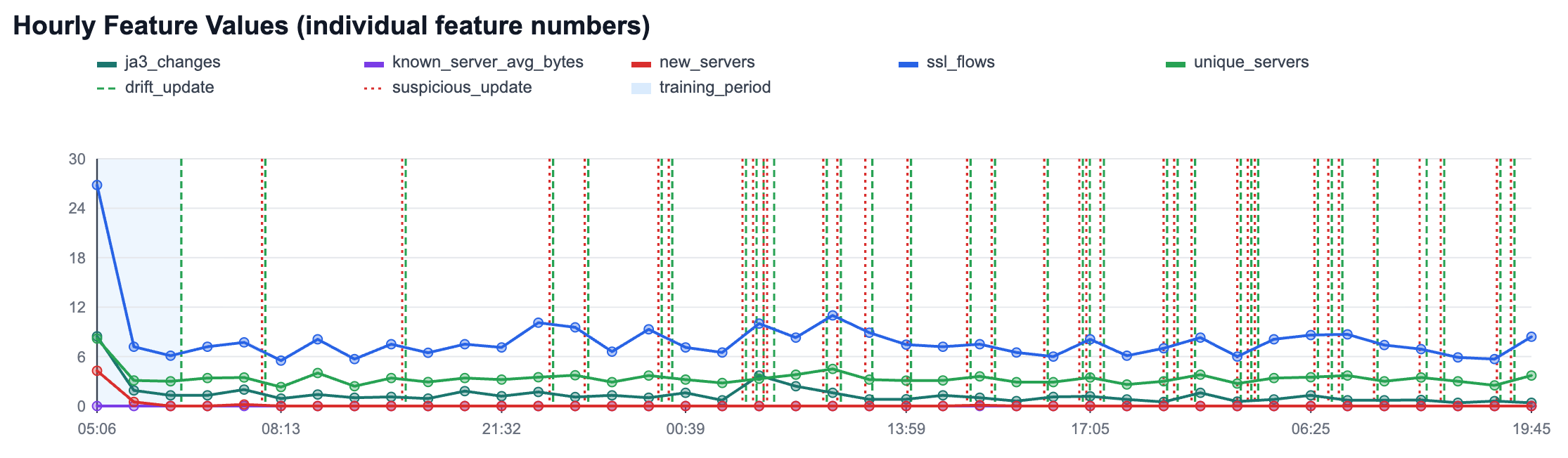

HTTPS Anomaly Detection in Slips: Adaptive Baselines for Real Traffic

The new HTTPS anomaly detection module in Slips builds per-host adaptive baselines in traffic time, then detects deviations at two levels: per-flow (for bytes to known servers) and per-hour (for host behavior like new servers, unique servers, JA3 changes, and flow volume). It uses online statistics and z-scores for transparent scoring, plus controlled adaptation states (training_fit, drift_update, suspicious_update) to keep learning while reducing poisoning risk.

The result is explainable, operational evidence written in clear, human-readable text: what changed, how confident the detection is, and why it is anomalous.
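
The online-statistics part can be illustrated with an exponentially weighted mean and variance whose learning rate depends on the adaptation state. This is a minimal sketch, not the module's code. The state names come from the post. The rules attached to them here are assumptions, as are all numeric defaults: full updates while training, a normal learning rate for non-anomalous values, and a much smaller rate for values beyond a z-score threshold.

```python
import math
from dataclasses import dataclass


@dataclass
class AdaptiveBaseline:
    min_train: int = 24              # hypothetical: e.g. 24 hourly windows of training
    z_threshold: float = 3.0         # hypothetical anomaly threshold
    drift_alpha: float = 0.05        # learning rate for normal-looking values
    suspicious_alpha: float = 0.005  # much slower learning from outliers
    n_train: int = 0
    mean: float = 0.0
    var: float = 0.0

    def _update(self, x: float, alpha: float) -> None:
        # Exponentially weighted mean and variance update.
        diff = x - self.mean
        incr = alpha * diff
        self.mean += incr
        self.var = (1.0 - alpha) * (self.var + diff * incr)

    def zscore(self, x: float) -> float:
        std = math.sqrt(self.var)
        if std == 0.0:
            # Zero spread: any deviation from the mean is infinitely unusual.
            return 0.0 if x == self.mean else math.copysign(math.inf, x - self.mean)
        return (x - self.mean) / std

    def observe(self, x: float) -> tuple[str, float]:
        if self.n_train < self.min_train:
            self.n_train += 1
            # alpha = 1/n reproduces the exact running mean and population variance.
            self._update(x, 1.0 / self.n_train)
            return "training_fit", 0.0
        z = self.zscore(x)
        if abs(z) < self.z_threshold:
            self._update(x, self.drift_alpha)
            return "drift_update", z
        self._update(x, self.suspicious_alpha)
        return "suspicious_update", z
```

During training the learning rate is 1/n, which makes the update equal to the exact running mean and population variance. After training, a fixed rate lets the baseline follow slow drift. The reduced rate for suspicious values limits how far an attacker can shift the baseline by injecting outliers.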

Rethinking Cybersecurity Defense: Principles from Biological Immunity

Our research identifies sixteen fundamental principles of biological immunity and translates them into cybersecurity defense architectures that emphasize multi-dimensional coordination over single-point tactics.

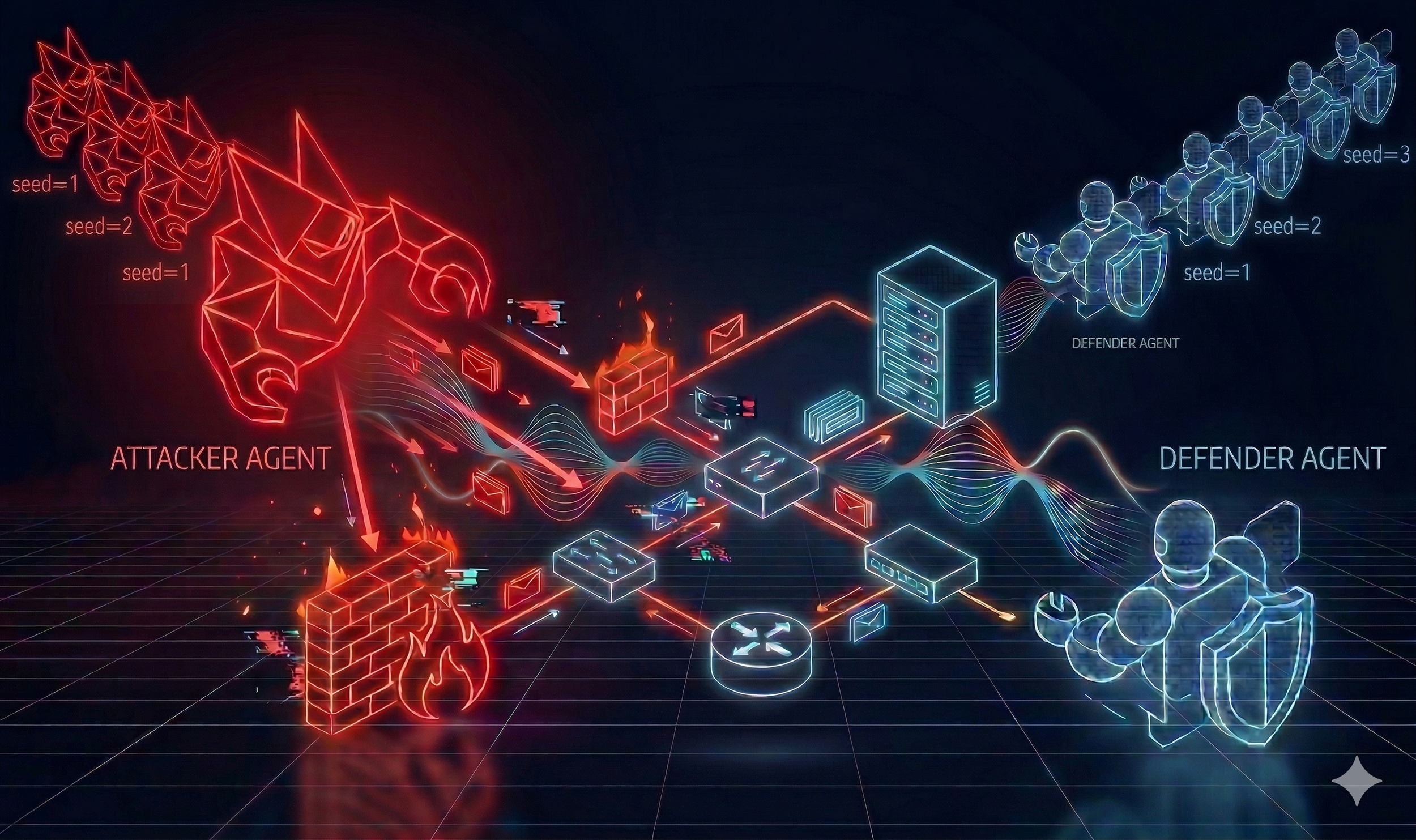

NetSecGame - A Framework for Training and Evaluating AI Agents in Network Security Environments

We are excited to announce the release of NetSecGame (NSG) v0.1.0, a framework for training and evaluating AI agents in network security environments. Developed at the Stratosphere Laboratory at CTU in Prague, NSG provides a highly configurable testbed for both offensive and defensive security tasks.

New Slips version v1.1.17 is here!

Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

New Slips version v1.1.16 is here!

Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

New Slips version v1.1.15 is here!

Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

AI Attackers in Your Pocket: How Small Language Models Can Outsmart Cyber Defenses

We are pleased to announce the publication of our latest paper, “Building adaptive and transparent cyber agents with local language models,” in the journal Expert Systems with Applications.

New Slips version v1.1.14 is here!

Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

New Slips version v1.1.13 is here!

Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

New Slips version v1.1.12 is here!

Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

New Slips version v1.1.11 is here!

Our team is excited to share the latest news and features of Slips, our behavioral-based machine learning intrusion detection system.

How Well Do LLMs Perform on a Raspberry Pi 5?

Can a Raspberry Pi 5 run Large Language Models? In this post, we share the results of our experiments, analyzing how LLMs perform on this low-cost hardware and exploring the challenges and performance trade-offs.

Guest Post: A Graph-Based Approach to Cyber Threat Intelligence

A university project turned into a powerful tool: Rocío Baggio and Diego Forni’s graph-based system connects malicious IPs, attack techniques, and threat actors—giving cybersecurity analysts clearer insights into the ever-evolving threat landscape.
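
The core idea of linking indicators, techniques and actors can be illustrated with a small adjacency-set graph. A breadth-first search over it answers questions such as "which actors are within two hops of this IP?". This is a toy Python sketch, not the authors' system. The following are all assumptions: the `type:value` node naming, the `ThreatGraph` class, and the example nodes (documentation-range IPs, the MITRE ATT&CK technique ID T1110 and placeholder actor names).

```python
from collections import defaultdict, deque


class ThreatGraph:
    """Toy CTI graph; nodes are 'type:value' strings (hypothetical schema)."""

    def __init__(self) -> None:
        self.adj: defaultdict[str, set[str]] = defaultdict(set)

    def link(self, a: str, b: str) -> None:
        # Undirected edge, e.g. IP <-> technique or technique <-> actor.
        self.adj[a].add(b)
        self.adj[b].add(a)

    def related(self, start: str, node_type: str, max_hops: int = 2) -> set[str]:
        """Breadth-first search for nodes of `node_type` within `max_hops` edges."""
        seen = {start}
        queue = deque([(start, 0)])
        found: set[str] = set()
        while queue:
            node, depth = queue.popleft()
            if depth == max_hops:
                continue
            for nxt in self.adj.get(node, ()):
                if nxt in seen:
                    continue
                seen.add(nxt)
                if nxt.startswith(node_type + ":"):
                    found.add(nxt)
                queue.append((nxt, depth + 1))
        return found


if __name__ == "__main__":
    g = ThreatGraph()
    g.link("ip:203.0.113.7", "technique:T1110")
    g.link("technique:T1110", "actor:ActorA")
    print(g.related("ip:203.0.113.7", "actor"))
```

Bounding the search depth keeps answers interpretable. One hop returns direct links, while two hops connect an IP to actors through a shared technique.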

Exploring LLMs for Cybersecurity: Our ICAART 2024 Extension Paper

We’re excited to share our new ICAART extension paper, published in the Lecture Notes in Artificial Intelligence series. The paper explores how Large Language Models (LLMs) can be leveraged as agents for network security testing, outperforming traditional reinforcement learning methods in several scenarios. This research, including the introduction of our new NetSecGame environment, demonstrates the promise of LLMs in cybersecurity applications.